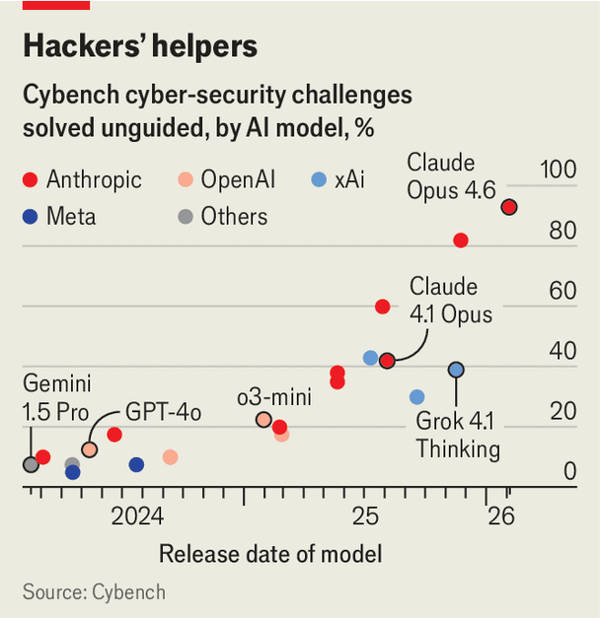

The Trump-Anthropic clash distills AI's central contradiction: the U.S. government ordered federal agencies to stop using Anthropic's technology immediately while also demanding the company's co-operation for the next six months, revealing its dependence on a tool it treats as both indispensable and dangerous. The immediate financial stake is a $200m Pentagon contract, only about 0.05% of Anthropic's recent $380bn valuation, but the wider risk extends to its other government deals, supply-chain relationships, and investors.
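The 0.05% figure follows directly from the two amounts quoted in the paragraph above, expressed in billions of dollars:

$$
\frac{\$200\text{m}}{\$380\text{bn}} = \frac{0.2}{380} \approx 5.3 \times 10^{-4} \approx 0.05\%
$$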

In the near term, Anthropic remains hard to replace. Until late February it was the only AI lab cleared to handle classified military data; xAI gained similar approval only then, and OpenAI, despite signing a Pentagon contract on the same day, still needs time to integrate into military systems. The public response was swift and measurable: within a day of provoking Trump, Claude became the most downloaded free app in Apple's U.S. App Store, and on Monday it briefly went down after a surge in usage.

The broader trend is that AI risks are moving from the hypothetical to the documented while safety restraints weaken. Research in 2024 found that AI-written phishing emails already matched those crafted by human experts; in November 2025 Chinese state-backed hackers jailbroke Claude and had it running new exploit software within an hour; and in February 2026 Britain's AI Security Institute (AISI) disclosed a "universal jailbreak" that broke both Anthropic's and OpenAI's systems. At the same time, industry and policy are retreating: China's DeepSeek took almost a year to add an 11-page safety appendix for its R1 model, and Anthropic has shifted from a ban on releasing dangerous models to merely promising not to be the first to sell one. Rising risk and loosening restraint are now advancing together under competitive, financial, and geopolitical pressure.

Source: An AI disaster is getting ever closer

Subtitle: The spat between America’s government and Anthropic intensifies an alarming trend

Dateline: March 5, 2026, 4:16 a.m. | SAN FRANCISCO